We Made Our Own Website AI-Agent-Ready. Here's What That Means.

- Curtis McEwen

- Apr 18

Updated: 2 days ago

A few weeks ago, we published a post about AI Search Optimization and why contractors should care about how AI tools find and represent their businesses online. Today, we're following that up with something more concrete: we did the work on our own site, and we can show you exactly what changed.

What "Agent-Ready" Means

AI tools like ChatGPT, Perplexity, and Google's AI Overviews don't just crawl websites the way search bots do. A new generation of AI agents actively looks for structured signals that tell them what a site does, how to access it, and whether its content can be used. When those signals are missing, the site is effectively invisible to those agents: not because it isn't indexed, but because it isn't speaking their language.

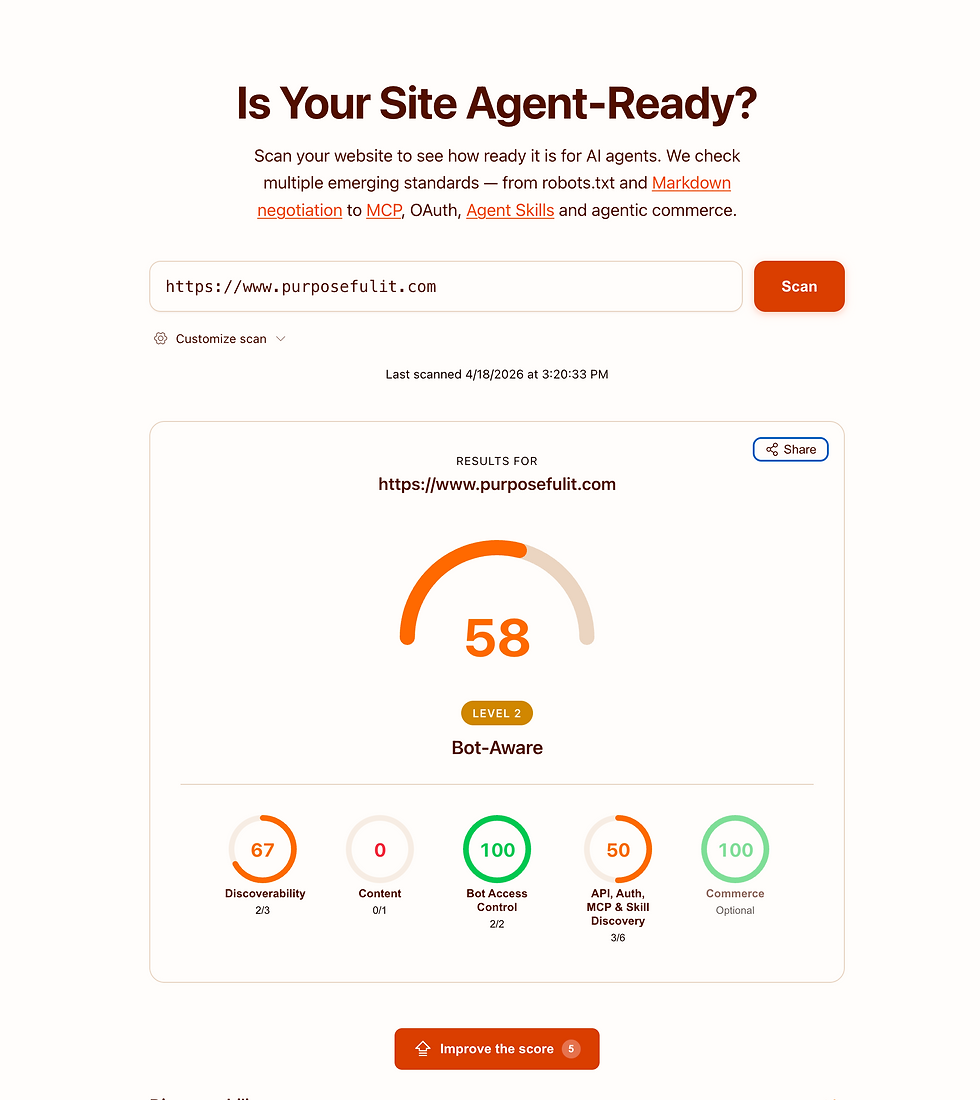

There's a tool called isitagentready.com that scores websites on this. It checks for things like agent discovery files, bot access declarations, MCP endpoints, and API catalogs. Before we made any changes, purposefulit.com scored 25 out of 100: Level 1, "Basic Web Presence."

What We Did

Everything was implemented through our hosting platform's backend scripting layer. No third-party services, no custom server infrastructure.

Here's what we added:

robots.txt Content-Signal directives. A recently proposed robots.txt extension that tells AI tools explicitly how they're allowed to use your content: for training, for search, or as input to AI responses. We declared all three as allowed, which is the right call for a business that wants to be found and cited.
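
A minimal sketch of what such a declaration can look like, assuming the `Content-Signal` syntax and the signal names `search`, `ai-input`, and `ai-train` from the Content Signals proposal. The helper name `build_robots_txt` is illustrative, and the Python below only renders the file's text; how the file gets served depends on the platform.

```python
from typing import Dict


def build_robots_txt(signals: Dict[str, bool]) -> str:
    """Render a minimal robots.txt that applies one Content-Signal line to all crawlers."""
    # Each signal becomes name=yes or name=no, comma-separated on one line.
    signal_line = ", ".join(
        f"{name}={'yes' if allowed else 'no'}" for name, allowed in signals.items()
    )
    lines = [
        "User-Agent: *",
        f"Content-Signal: {signal_line}",
        "Allow: /",
    ]
    return "\n".join(lines) + "\n"


# All three uses allowed, matching the choice described above.
ROBOTS_TXT = build_robots_txt({"search": True, "ai-input": True, "ai-train": True})
print(ROBOTS_TXT)
```

Flipping any value to `False` renders that signal as `no`, which is how a site would opt out of a single use such as training while staying open to search.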

API catalog at /.well-known/api-catalog. A machine-readable file that tells AI agents which APIs this site exposes. The location is defined by RFC 9727, and it's one of the first things discovery-capable agents look for.
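
A minimal sketch of an API catalog document, assuming the Linkset JSON format (`application/linkset+json`) that RFC 9727 builds on. The `example.com` origin and the single listed endpoint are placeholders, not the contents of our actual catalog, and `build_api_catalog` is a hypothetical helper name.

```python
import json
from typing import List


def build_api_catalog(site_origin: str, api_urls: List[str]) -> str:
    """Return a Linkset JSON document listing each API URL as an item of the catalog."""
    catalog = {
        "linkset": [
            {
                # The anchor is the catalog's own well-known URL.
                "anchor": f"{site_origin}/.well-known/api-catalog",
                # Each item points at one API the site exposes.
                "item": [{"href": url} for url in api_urls],
            }
        ]
    }
    return json.dumps(catalog, indent=2)


# Placeholder origin and endpoint, for illustration only.
print(build_api_catalog("https://www.example.com", ["https://www.example.com/_api/mcp"]))
```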

MCP Server Card at /.well-known/mcp/server-card.json. Wix sites running MCP (the Model Context Protocol) have a native endpoint at /_api/mcp. The server card is the declaration that points agents to it. Without this file, the endpoint exists but agents don't know to use it.
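
Because the server card's job is to point at the endpoint, one quick sanity check is to confirm that the published JSON mentions the endpoint path at all. The sketch below does that without assuming any particular card schema: it walks every value in the parsed JSON and looks for the path. The function name and the sample card's key names are hypothetical placeholders, not the server card specification.

```python
import json
from typing import Any


def mentions_endpoint(node: Any, endpoint_path: str) -> bool:
    """Return True if any string value nested inside node contains endpoint_path."""
    if isinstance(node, str):
        return endpoint_path in node
    if isinstance(node, dict):
        return any(mentions_endpoint(value, endpoint_path) for value in node.values())
    if isinstance(node, list):
        return any(mentions_endpoint(item, endpoint_path) for item in node)
    return False  # numbers, booleans, and null cannot hold a URL


# Hypothetical card body; the key names are placeholders, not a real schema.
sample_card = json.loads(
    '{"name": "example-site", "endpoint": {"url": "https://www.example.com/_api/mcp"}}'
)
print(mentions_endpoint(sample_card, "/_api/mcp"))
```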

Agent Skills index at /.well-known/agent-skills/index.json. A discovery index that lists the skill documents this site publishes, with cryptographic hashes for verification. Ours points to the site's llms.txt, which Wix generates automatically and which documents the full MCP toolset available to agents.
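
The hash is what lets an agent confirm that the skill document it fetched is the one the index describes. A minimal sketch of that idea, assuming SHA-256 hex digests; the entry's field names (`name`, `url`, `sha256`), the helper names, and the sample document text are illustrative assumptions rather than the exact index schema.

```python
import hashlib
from typing import Dict


def sha256_hex(document: bytes) -> str:
    """Return the SHA-256 digest of a document as a lowercase hex string."""
    return hashlib.sha256(document).hexdigest()


def build_skill_entry(name: str, url: str, document: bytes) -> Dict[str, str]:
    """Build one index entry; the field names here are illustrative assumptions."""
    return {"name": name, "url": url, "sha256": sha256_hex(document)}


def verify_skill(entry: Dict[str, str], fetched: bytes) -> bool:
    """Check that fetched bytes match the digest recorded in the index entry."""
    return sha256_hex(fetched) == entry["sha256"]


# Placeholder content standing in for the site's llms.txt.
llms_txt = b"# Example Site\n\nPlaceholder skill document.\n"
entry = build_skill_entry("llms-txt", "https://www.example.com/llms.txt", llms_txt)
print(verify_skill(entry, llms_txt))         # unchanged document
print(verify_skill(entry, llms_txt + b"x"))  # modified document
```

One practical consequence: if the skill document changes, the digest in the index has to be regenerated, or verification will fail.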

The redirects to make all these /.well-known/ paths work on a Wix site require a specific pattern in the URL Redirect Manager, something we've now done on multiple client sites.

The Result

After publishing the changes and rescanning, purposefulit.com moved from 25 to 58: Level 2, "Bot-Aware." Bot Access Control went from 1/2 to 2/2 (green). API, Auth, MCP and Skill Discovery went from 0/6 to 3/6.

The remaining gaps require infrastructure that our web hosting platform doesn't currently support: custom HTTP response headers for Link header discovery and markdown content negotiation. Those require Cloudflare or a proxy layer in front of the site. For most small business clients, 58 is the realistic ceiling without adding that layer.
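
To make those two gaps concrete, here is a sketch of the logic a proxy layer would apply to each request. It is not something we run today; the function names are hypothetical, the `api-catalog` link relation comes from RFC 9727, and the `Accept` parsing ignores quality values for brevity.

```python
from typing import Dict


def wants_markdown(accept_header: str) -> bool:
    """Return True if the Accept header lists text/markdown (q-values are ignored)."""
    media_types = [part.split(";")[0].strip().lower() for part in accept_header.split(",")]
    return "text/markdown" in media_types


def choose_content_type(accept_header: str) -> str:
    """Pick the representation to serve: markdown when asked for, HTML otherwise."""
    return "text/markdown" if wants_markdown(accept_header) else "text/html"


def add_discovery_link(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of the headers with a Link header pointing at the API catalog.

    Any existing Link header is overwritten in this simplified version.
    """
    updated = dict(headers)
    updated["Link"] = '</.well-known/api-catalog>; rel="api-catalog"'
    return updated


print(choose_content_type("text/markdown, text/html;q=0.8"))
print(add_discovery_link({"Content-Type": "text/html"}))
```

Both functions touch only request and response headers, which is exactly the part of the exchange a hosted site builder typically does not let you control.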

58 is still well ahead of where most small business websites sit today, which is around 20 to 25. And that gap matters: it's the difference between a business that AI tools can discover, understand, and cite, and one that doesn't show up at all when someone asks an AI assistant for a recommendation.

We're Doing This for Every Client

Agent-readiness is now part of our standard onboarding for new clients. Every site we manage gets the same backend setup, robots.txt configuration, and discovery files.

If you want to know where your site stands, run it through isitagentready.com. If your score is below 50, there's real work to do, and we can help. Before any of this, your site has to actually rank. Here's what to fix first.